Despite concerns from InfoSec and compliance teams, employees and CEOs alike recognize the productivity benefits of using ChatGPT at work and are pushing hard to adopt it. In the wake of this technological shift, we're seeing corporate clashes over AI usage policies and a rise in shadow IT use of ChatGPT ("Shadow AI").

At Blueteam AI, it's our mission to enable safe and secure adoption of ChatGPT in the enterprise. We're building a suite of tools to help, and along the way we've interviewed dozens of professionals from InfoSec and compliance teams. Here are some of the common pain points we've heard and how we've addressed them in Blueteam AI's network monitoring.

- What are we not aware of?
  - Can we shine a light on the shadow AI in our organization?
  - What departments and teams are using it?
- What are the opportunities and risks?
  - Where is generative AI useful?
  - Am I leaking sensitive information?
- How can I protect myself?
  - How do I enforce DLP policies on AI usage?
  - How can I monitor for policy violations?

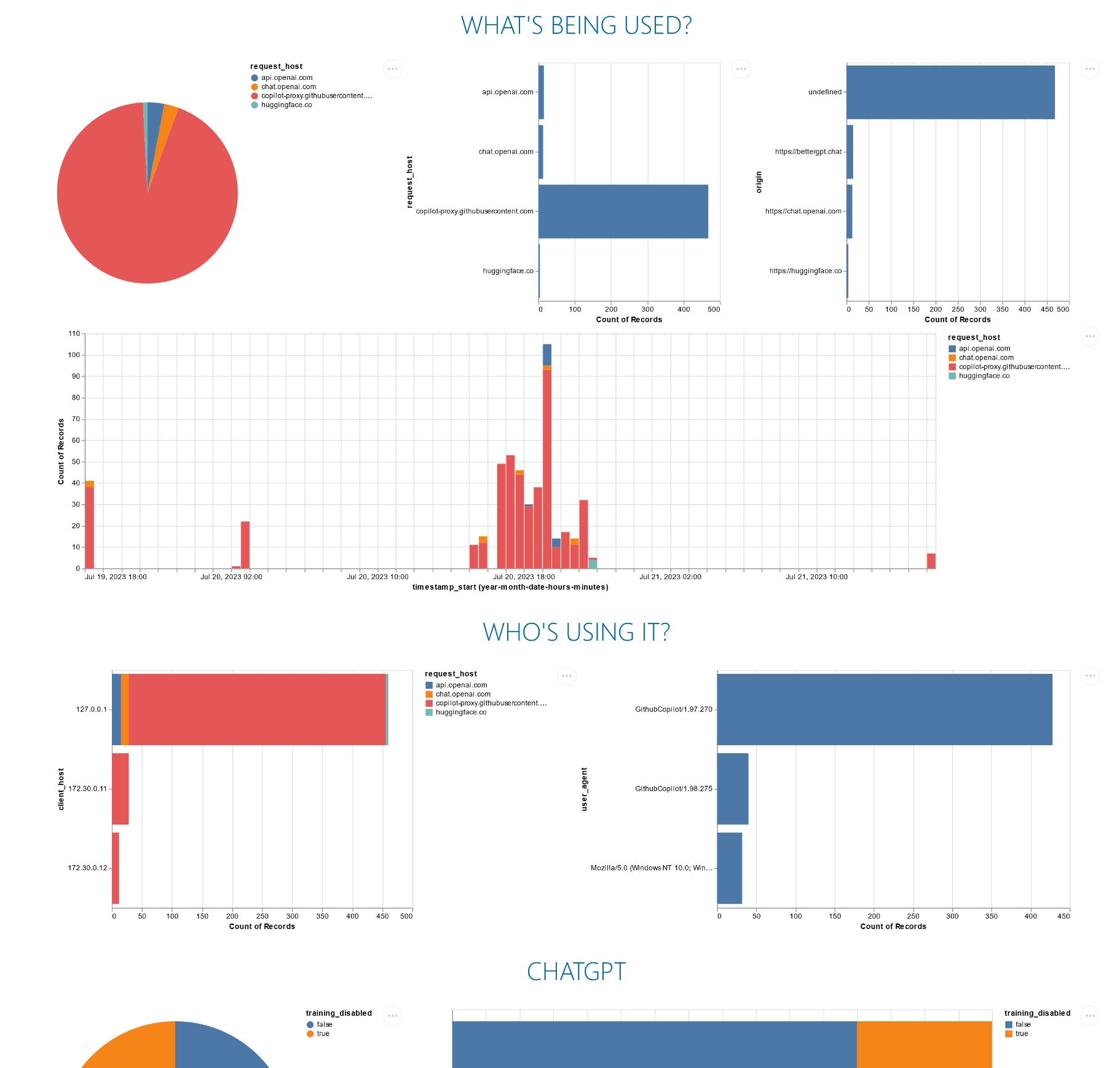

All charts in this post are screenshots from our upcoming AI network monitoring product. To learn more, sign up for our mailing list.

What are we not aware of?

Can we shine a light on the shadow AI in our organization?

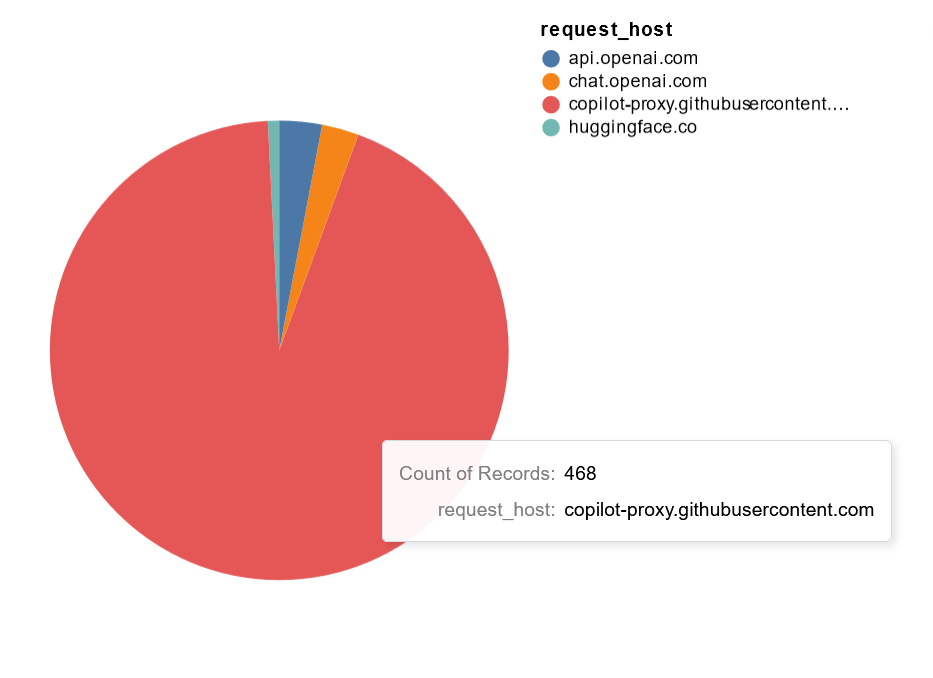

To address security concerns, we've heard that a number of companies are considering on-premise deployments of large language models (LLMs). However, this approach does not address the shadow AI problem, where employees choose to use unsanctioned AI services like GitHub Copilot and ChatGPT.

By analyzing outbound network traffic to AI services, we can identify AI usage even if it is not explicitly approved by central IT.
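To make the idea concrete, here is a minimal sketch of matching outbound connection records against a watchlist of AI service domains. It is not how our product is implemented: the record format (`src_ip`, `dest_host`), the function names, and the two watchlist entries are all assumptions chosen for illustration, and a real deployment would maintain a much longer, regularly updated list.

```python
from collections import Counter

# Illustrative watchlist only (an assumption for this sketch).
AI_SERVICE_DOMAINS = {
    "openai.com": "OpenAI / ChatGPT",
    "githubcopilot.com": "GitHub Copilot",
}


def match_service(hostname):
    """Return a service label if hostname is a watched domain or one of its subdomains."""
    host = hostname.lower().rstrip(".")
    for domain, label in AI_SERVICE_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return label
    return None


def count_ai_requests(records):
    """Count requests per AI service across hypothetical connection records."""
    counts = Counter()
    for rec in records:
        label = match_service(rec["dest_host"])
        if label is not None:
            counts[label] += 1
    return counts


# Hypothetical log records, e.g. derived from DNS or TLS SNI metadata.
sample_records = [
    {"src_ip": "10.1.0.5", "dest_host": "chat.openai.com"},
    {"src_ip": "10.1.0.5", "dest_host": "api.openai.com"},
    {"src_ip": "10.2.0.7", "dest_host": "api.githubcopilot.com"},
    {"src_ip": "10.2.0.7", "dest_host": "example.com"},
]
print(count_ai_requests(sample_records))
```

Matching on the suffix with a leading dot avoids false positives on lookalike hosts such as `notopenai.com`.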

What departments and teams are using it?

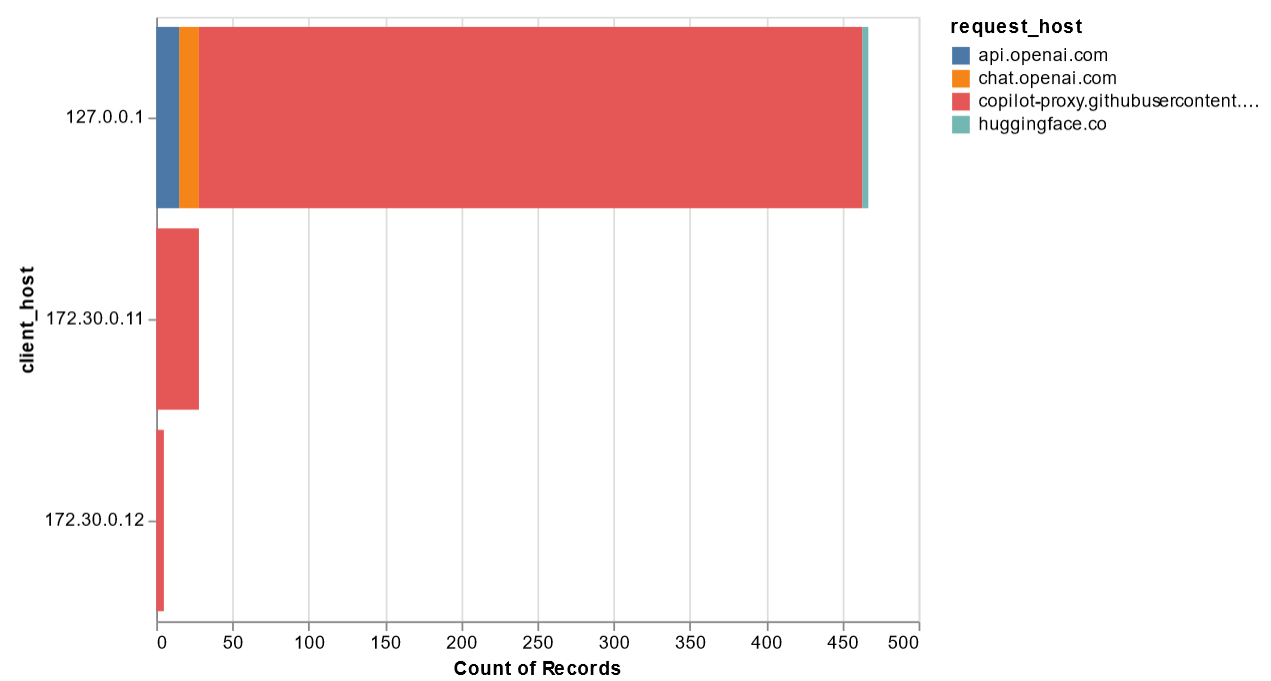

We've heard CEOs issue mandates to integrate AI into department workflows, but how can we quantify the adoption of AI across the organization? Segmenting the traffic by host enables us to identify which individuals, teams, and departments are using AI at work and how much they are using it.
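As a rough illustration of segmenting by host, the sketch below rolls AI-bound requests up to departments using a subnet-to-department mapping. The subnets, department names, and record format are hypothetical; in practice the mapping might come from an asset inventory or directory service instead.

```python
import ipaddress
from collections import defaultdict

# Hypothetical subnet-to-department mapping (an assumption for this sketch).
DEPARTMENT_SUBNETS = {
    "Engineering": ipaddress.ip_network("10.1.0.0/16"),
    "Marketing": ipaddress.ip_network("10.2.0.0/16"),
}


def department_for(ip):
    """Map a source IP address to a department name, or "Unknown"."""
    addr = ipaddress.ip_address(ip)
    for name, network in DEPARTMENT_SUBNETS.items():
        if addr in network:
            return name
    return "Unknown"


def usage_by_department(records):
    """Return request counts and distinct host counts per department."""
    totals = defaultdict(lambda: {"requests": 0, "hosts": set()})
    for rec in records:
        dept = department_for(rec["src_ip"])
        totals[dept]["requests"] += 1
        totals[dept]["hosts"].add(rec["src_ip"])
    return {
        dept: {"requests": t["requests"], "hosts": len(t["hosts"])}
        for dept, t in totals.items()
    }


# Hypothetical records that have already been identified as AI-bound traffic.
ai_records = [
    {"src_ip": "10.1.0.5"},
    {"src_ip": "10.1.0.5"},
    {"src_ip": "10.1.3.9"},
    {"src_ip": "10.2.0.7"},
    {"src_ip": "192.168.1.1"},
]
print(usage_by_department(ai_records))
```

Tracking distinct hosts alongside raw request counts helps separate one heavy user from broad adoption across a team.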

What are the opportunities and risks?

Where is generative AI useful?

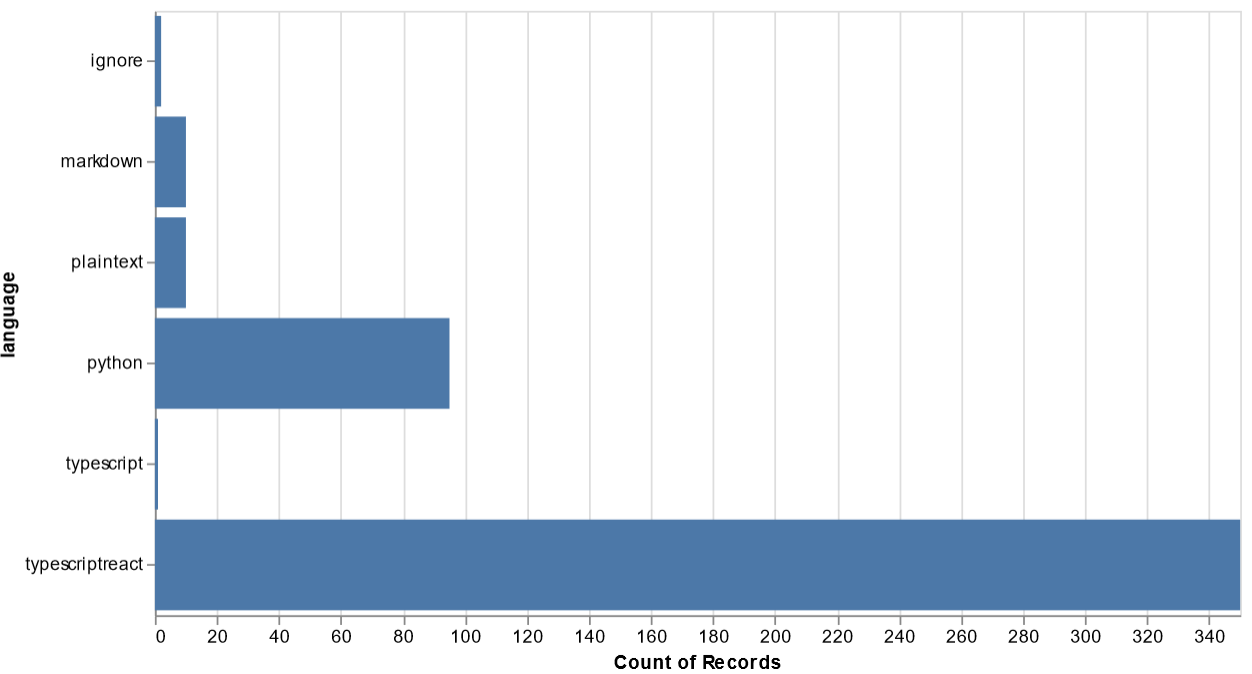

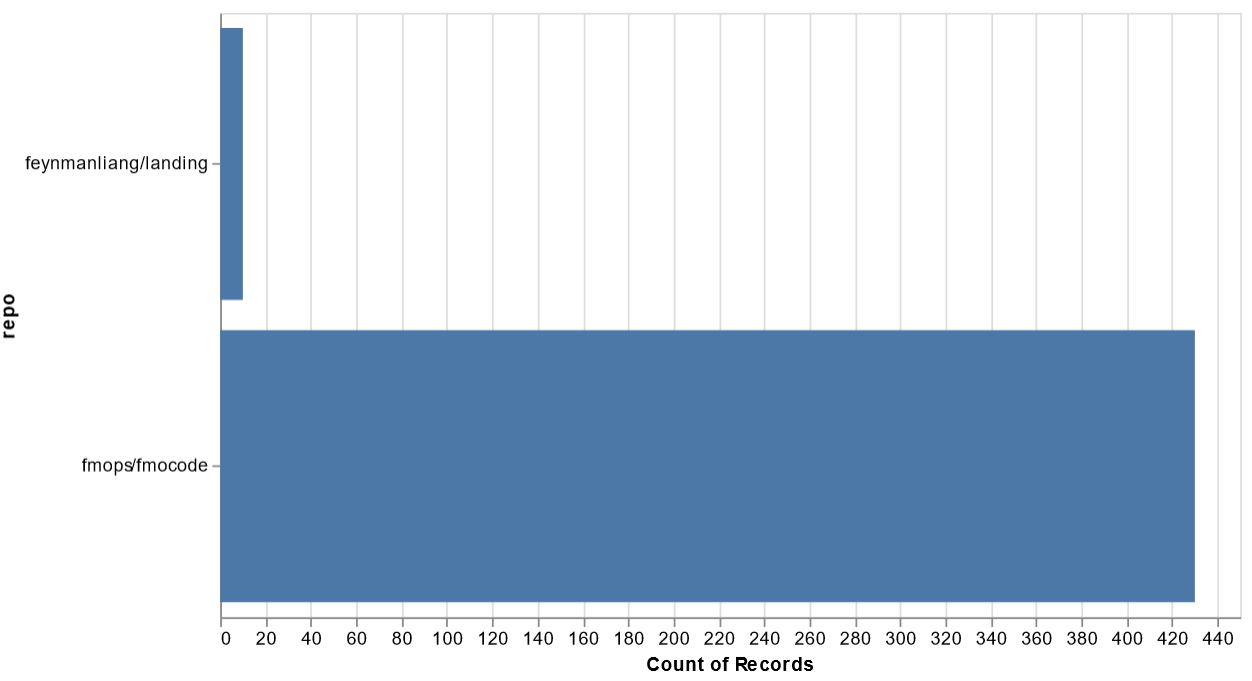

We've heard that developers are using GitHub Copilot to write code, but where can we expect to see the most productivity gains? Does it benefit some roles more than others?

And in order for management to effectively rebalance headcount after AI adoption, we need to know how AI improves productivity across different teams' code repositories.

Am I leaking sensitive information?

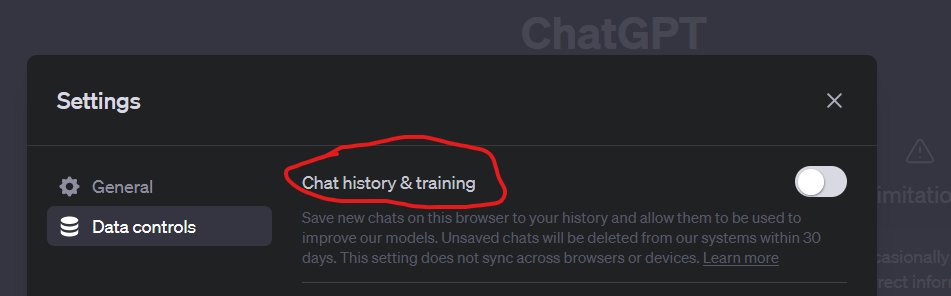

It's no secret that conversations with ChatGPT are used to train the model. While ChatGPT has an option to disable training data collection:

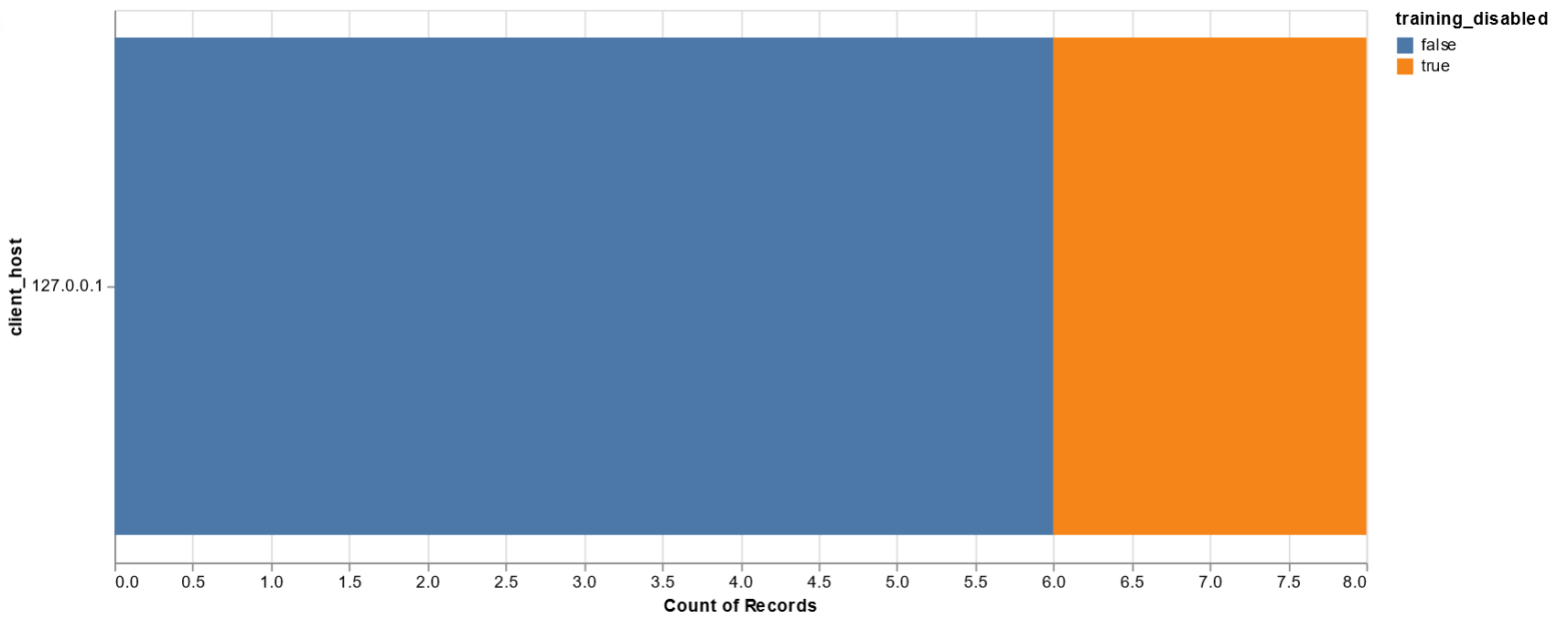

It's up to individual employees to remember to enable this setting. How compliant are my employees, and where are remediations necessary?

How can I protect myself?

By combining our AI network monitoring with our API middleware stack, we can go beyond traffic analysis and take action to enforce data loss prevention (DLP) and threat protection policies on generative AI usage.

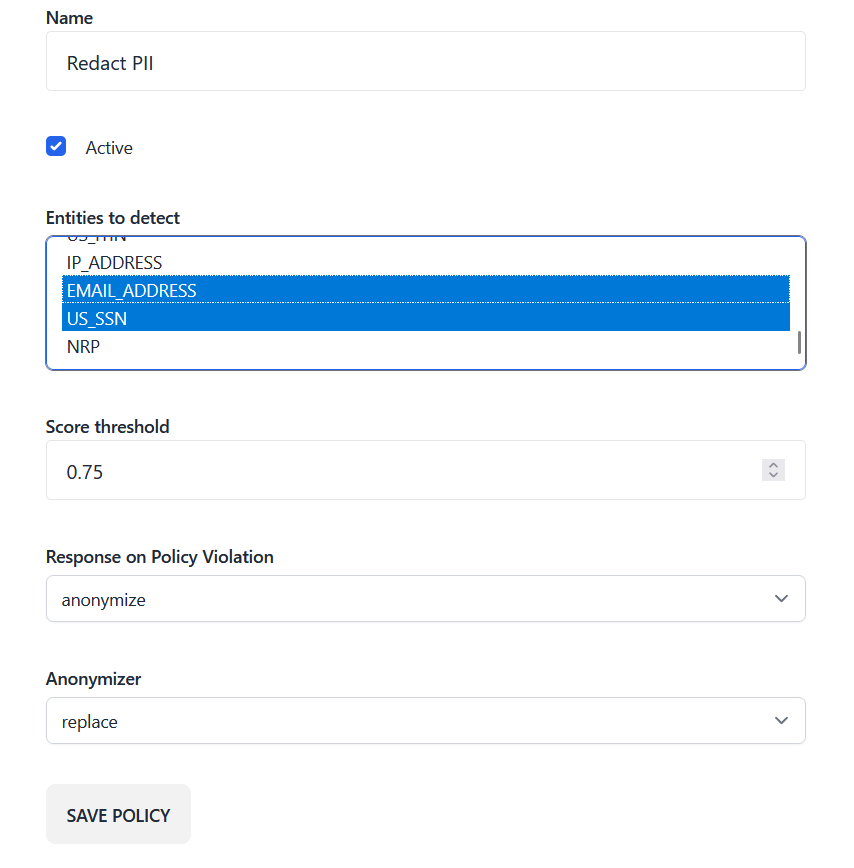

How do I enforce DLP policies on AI usage?

Configure a DLP policy to detect the presence of sensitive data like personally identifiable information (PII) and take actions to nudge the user, block the request, or automatically redact the data from the request.
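The three actions can be sketched in a few lines. This is a simplified illustration under stated assumptions, not our middleware's actual API: the `Decision` type, the `apply_policy` function, and the two regular expressions (email addresses and US Social Security numbers) are hypothetical, and production-grade PII detection needs far more than a pair of patterns.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Deliberately simple detectors (assumptions for this sketch).
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Decision:
    action: str            # "allow", "nudge", "block", or "redact"
    findings: List[str]    # names of the detectors that matched
    prompt: Optional[str]  # text to forward upstream, or None if blocked


def apply_policy(prompt, action="redact"):
    """Apply the configured action when a prompt contains sensitive data."""
    if action not in ("nudge", "block", "redact"):
        raise ValueError(f"unknown action: {action}")
    findings = sorted(name for name, pat in PATTERNS.items() if pat.search(prompt))
    if not findings:
        return Decision("allow", [], prompt)
    if action == "block":
        return Decision("block", findings, None)
    if action == "redact":
        redacted = prompt
        for name in findings:
            redacted = PATTERNS[name].sub(f"[REDACTED:{name}]", redacted)
        return Decision("redact", findings, redacted)
    # "nudge": forward the prompt unchanged, but report findings so the user can be warned.
    return Decision("nudge", findings, prompt)


decision = apply_policy("Email jane@example.com about SSN 123-45-6789")
print(decision.action, decision.prompt)
```

Returning the findings with every decision means the same result can drive both the user-facing nudge and an audit log entry.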

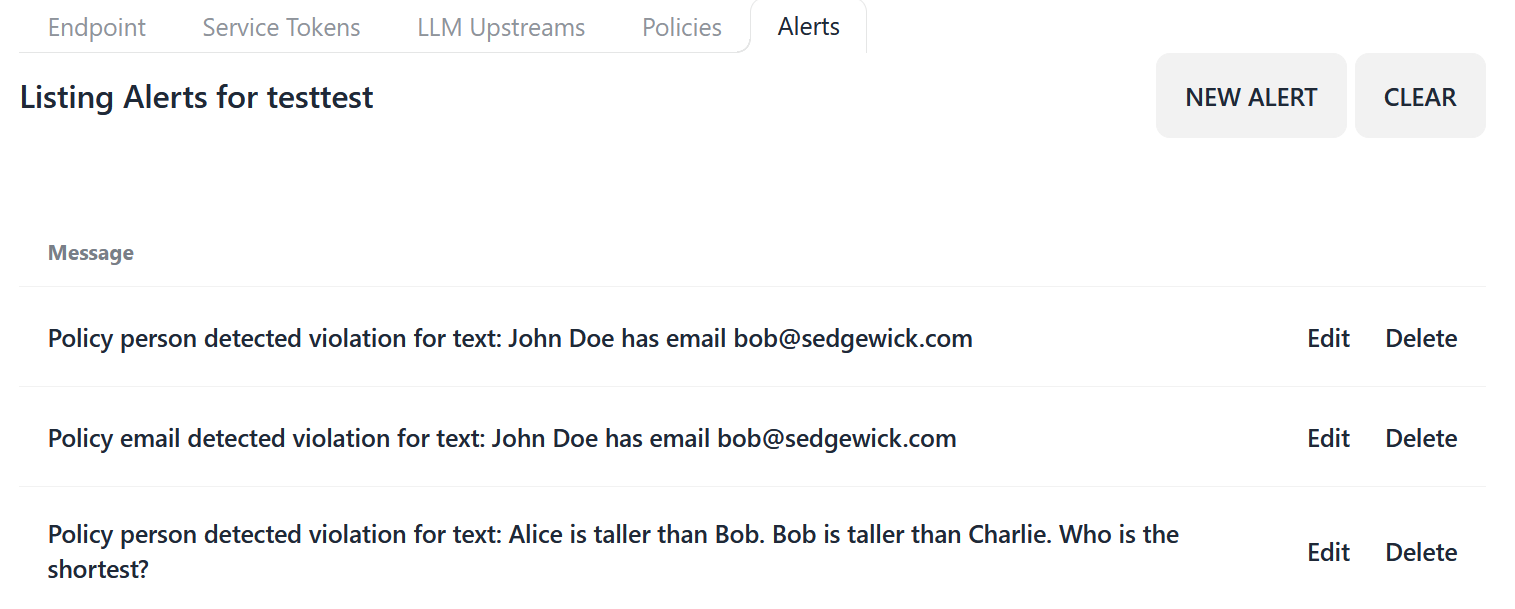

How can I monitor for policy violations?

As AI usage becomes more prevalent, it's important to monitor for policy violations so you can receive timely alerts and proactively address them before they become a problem.
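One simple way to turn individual violations into timely alerts is a sliding-window threshold per host. The sketch below is only an assumption about how such a rule could look; the `ViolationMonitor` class, its default threshold, and its window length are hypothetical and not taken from our product.

```python
from collections import defaultdict, deque


class ViolationMonitor:
    """Signal an alert when a host reaches `threshold` violations within `window_seconds`."""

    def __init__(self, threshold=3, window_seconds=3600):
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # host -> timestamps of recent violations

    def record(self, host, timestamp):
        """Record a violation at `timestamp` (seconds); return True if an alert should fire."""
        events = self._events[host]
        events.append(timestamp)
        # Drop violations that have fallen out of the sliding window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) >= self.threshold


monitor = ViolationMonitor(threshold=3, window_seconds=60)
for ts in (0, 10, 20):
    fired = monitor.record("10.1.0.5", ts)
    print(ts, fired)
```

Keeping a separate window per host means a single noisy machine does not mask, or trigger alerts for, anyone else.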

Summary

At Blueteam AI, we recognize the growing interest in leveraging ChatGPT's capabilities in the workplace. We've observed a significant push from employees and CEOs alike, driven by the notable productivity benefits it brings. However, this technological shift has also given rise to concerns among InfoSec and compliance teams, leading to corporate conflicts over AI usage policies and an increase in shadow IT usage of ChatGPT.

As part of our commitment to enabling a safe and secure adoption of ChatGPT in the enterprise, we have developed an AI network monitoring product. By deploying this solution, you can gain enhanced visibility and control over AI usage within your corporate networks. Our product allows you to identify unauthorized AI usage, track its adoption across different departments and teams, and uniformly enforce security and DLP policies in order to ensure a seamless integration of ChatGPT while maintaining compliance and security standards.

AI network monitoring with Blueteam AI

Blueteam's AI network monitoring is a network appliance that performs deep packet inspection of network traffic to AI services in order to gain visibility and control over AI usage on corporate networks.

Tired of saying no? Learn how to adopt a "yes and" approach to AI usage in the enterprise by signing up for our mailing list.